Enterprise AI maturity is not built by deploying more AI agents. It’s built by deploying the right ones.

A well-chosen use case does more than deliver value; it reduces manual effort, improves process reliability, and creates measurable business ROI early in the AI journey.

A poorly chosen one does the opposite. It increases implementation overhead, creates operational inefficiencies, and delays meaningful business outcomes from AI initiatives.

For decision-makers responsible for enterprise AI outcomes, the difference between momentum and rework starts with use case qualification.

In this article, we break down the conditions that make a use case AI agent-ready and how to prioritize where to start, and we share a free scorecard you can use to evaluate your own use cases.

TABLE OF CONTENTS

- What Is an AI Use Case? And Why Tasks Aren’t Enough

- What Makes a Use Case Agent-Ready

- Top AI Agent Use Cases Examples in 2026

- Get Your AI Use Case Qualification Scorecard

- How to Prioritize When Multiple Use Cases Qualify

- Building the Foundation for Production-Grade AI

- FAQs

What Is an AI Use Case? And Why Tasks Aren’t Enough

Why "Task" Is the Wrong Unit of Measurement

Because a task is a single unit of action. Teams usually start with task-level questions:

Can an AI agent handle our ticket categorization?

Can it automate data entry?

Can it answer employee queries?

These are reasonable starting points.

But tasks are too narrow a frame for enterprise AI decisions.

What is an AI Use Case?

A use case, in the context of enterprise AI, is a specific operational bottleneck within a defined business context with known inputs, a clear output, the right people involved, and consequences tied to business performance.

It is broader than a task (which is a single action) and narrower than a process (which is an entire end-to-end workflow).

Use Case for Compliance Analyst (Insurance)

Summarizing policy renewal documents for internal review.

Needs: precision, auditability, regulatory alignment

Use Case for Claims Agent (Customer Support)

Summarizing a customer dispute for first-response resolution.

Needs: speed, clarity, conversational context

The task is the same. The use case is not. The agent-readiness is not.

A useful way to think about it:

- Task → "Classify this document"

- Use case → "Classify incoming loan application documents by type and route them to the correct processing team within the SLA window, based on applicant segment and product category"

- Process → "End-to-end loan origination"

The shift from thinking in terms of tasks to thinking in terms of use cases is the first adjustment that leads to better AI deployment decisions. It forces teams to consider not just what the agent will do, but where it will operate, who depends on its output, and what happens when it is wrong.

What Makes a Use Case Agent-Ready

There are five conditions that consistently indicate a use case is suitable for AI agent deployment. Not every condition needs to be fully met. But the more gaps that exist, the higher the deployment risk.

Condition 1: The Use Case Has Success Criteria That Exist Before the Agent Does

An AI agent doesn’t intuitively understand quality. For a use case like summarizing customer complaints, an agent needs to know what “good” looks like. It needs a clear definition of success.

- If you say: “Summarize this document” → that’s vague

- If you say: “Summarize in 5 bullet points, focusing on risks and action items” → now success is defined

In an actual enterprise environment, “good output” is often not consistent:

- Sales team → wants concise, persuasive summaries

- Compliance team → wants detailed, risk-heavy summaries

- Support team → wants customer-friendly language

If these expectations aren’t explicitly defined, the agent has no stable target.

A lot of work relies on implicit human judgment, like:

- “Does this sound professional enough?”

- “Is this risky to send?”

- “Is this insight actually useful?”

If these aren’t turned into rules, examples, or guidelines, the agent can’t replicate them.

Teams assume the agent will “figure it out.”

Task: summarize a customer complaint

What the agent delivers

- Follows instructions correctly

- Produces grammatically perfect output

- Completes the task end-to-end

What the business needs

- Flagged risks

- Output that matches team expectations

- Actionable insight

Completion ≠ Correctness

The diagnostic question: can your team write down, in specific terms, what a correct output looks like — before the agent is built? If that conversation surfaces disagreement across functions, the use case needs more internal alignment before it needs an agent.
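
One way to test whether success criteria really exist is to write them as a check that can run before any agent is built. The sketch below is a minimal illustration, assuming the “5 bullet points, focusing on risks and action items” criterion from earlier. The function name `meets_summary_criteria` and the keyword matching are hypothetical stand-ins; a real team would substitute its own agreed definitions.

```python
def meets_summary_criteria(summary: str,
                           required_bullets: int = 5,
                           required_topics: tuple = ("risk", "action")) -> bool:
    """Return True if the summary satisfies the written success criteria.

    Hypothetical criteria: exactly `required_bullets` bullet lines, and
    every required topic keyword appears somewhere in the text.
    """
    bullets = [line for line in summary.splitlines()
               if line.strip().startswith("- ")]
    if len(bullets) != required_bullets:
        return False
    text = summary.lower()
    return all(topic in text for topic in required_topics)
```

If different functions cannot agree on what goes into a check like this, that disagreement is the signal the diagnostic question is looking for.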

Condition 2: The Use Case Runs on Inputs That Are Already Structured

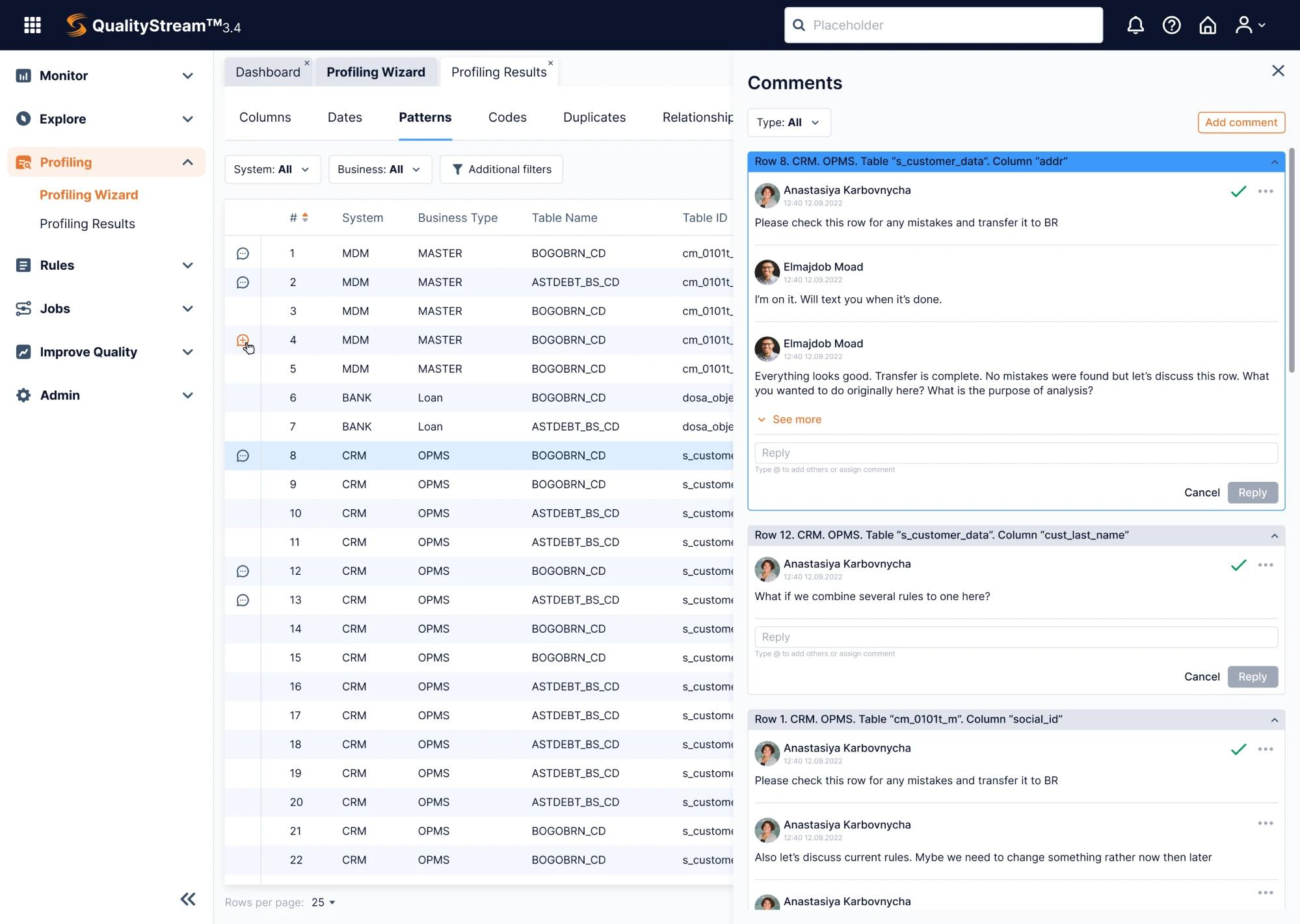

Consider a use case where an agent is expected to review customer data and flag issues.

In the example above, the data is organized into rows and tables but the actual context lives in comments, back-and-forth discussions, and implicit assumptions. This is where AI agents struggle.

When inputs are truly structured, everything is clearly labeled, consistently formatted, and easy to locate and process.

Think in terms of form fields (Name, Email, Issue Type), CRM records (deal stage, customer history) or standard templates (repeatable structure).

In these cases, the agent knows:

- what each field means

- where to look

- how to act

There’s little ambiguity and so execution is reliable.

The diagnostic question: what percentage of the inputs this use case requires come from structured system fields versus human-interpreted sources? That ratio is a rough but useful proxy for agent readiness.
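
The ratio in that question is simple arithmetic once the inputs are listed. Here is a rough sketch of the calculation; the input names are hypothetical examples for a “flag customer data issues” use case, and labeling each input as structured or not is a judgment your team would make.

```python
def structured_input_ratio(inputs: dict) -> float:
    """Share of inputs that come from structured system fields.

    `inputs` maps an input name to True (structured system field) or
    False (human-interpreted source such as comments or email threads).
    """
    if not inputs:
        raise ValueError("list at least one input")
    return sum(inputs.values()) / len(inputs)


# Hypothetical inventory of what the use case needs to read.
inputs = {
    "issue_type": True,
    "deal_stage": True,
    "customer_history": True,
    "reviewer_comments": False,
    "email_thread": False,
}
ratio = structured_input_ratio(inputs)
print(f"{ratio:.0%} of inputs are structured")
```

The number itself matters less than the exercise: listing every input tends to surface the human-interpreted sources that nobody had written down.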

Condition 3: The Use Case Produces Errors That Are Visible and Manageable

This is one of the most underweighted factors in enterprise AI evaluations.

Before deploying an agent on any use case, the question you ask should be: if the AI agent gets this wrong, how quickly will someone notice and what does that cost?

Consider a use case like sending appointment reminders based on scheduling data. If the wrong time is sent, the error is visible almost immediately. It can be corrected within hours, and the impact is limited.

Now contrast that with a use case like generating summaries that feed into decision-making. If the output is wrong, the error may not be obvious. It can quietly influence downstream actions before anyone notices.

| Use case type | Should you agentify? |
|---|---|
| High volume + low consequence | ✅ Strong candidate |
| Low volume + high consequence | ⚠️ Be cautious |
| High consequence + low visibility | ❌ Not agent-ready |

The diagnostic question: If this use case produces a wrong output today, who catches it, how long does it take, and what does fixing it cost the business?
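
The table above can be read as a small decision rule. The sketch below encodes it under two assumptions that are not in the table itself: the “high consequence + low visibility” row takes precedence over the others, and any combination the table does not cover returns “needs review”. The function name is hypothetical.

```python
def agentify_recommendation(high_volume: bool,
                            high_consequence: bool,
                            errors_visible: bool) -> str:
    """Map three use case attributes onto the table's recommendations."""
    # Assumption: hidden, costly errors outweigh every other factor.
    if high_consequence and not errors_visible:
        return "not agent-ready"
    if high_volume and not high_consequence:
        return "strong candidate"
    if not high_volume and high_consequence:
        return "be cautious"
    # Combinations the table does not cover (a fallback of this sketch).
    return "needs review"
```

For example, appointment reminders (high volume, low consequence, visible errors) come back as a strong candidate, while decision-feeding summaries with hard-to-spot errors do not.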

Condition 4: The Use Case Is Holding Up Work That Matters

Right now, teams often pick use cases like reminders, summaries, or small admin tasks because they’re simple and safe. But those usually don’t change outcomes. They just make individual tasks faster.

Imagine a pipeline for a human agent performing claims processing: Input → Review → Routing → Execution → Outcome

If Review is slow, that step is the bottleneck that impacts the business’s bottom line.

What “bottleneck” means here

- Work gets stuck

- People are waiting

- Everything downstream is delayed

The highest-value AI agent deployments remove friction from the critical path. This means focusing on steps that control the speed of the entire workflow.

The diagnostic question: if this use case were no longer dependent on human processing, what downstream work would move faster — and by how much?
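
To put a number on that question, it helps to see how much of the cycle time each stage consumes. The sketch below assumes the stages run one after another, so total cycle time is the sum of stage times. The hours are invented for illustration, and “Outcome” is left out because it is a result rather than a stage of work.

```python
# Hypothetical average hours each stage takes per claim.
stage_hours = {
    "Input": 0.5,
    "Review": 18.0,
    "Routing": 1.0,
    "Execution": 4.0,
}


def find_bottleneck(stage_hours: dict) -> tuple:
    """Return the slowest stage and its share of total cycle time."""
    total = sum(stage_hours.values())
    stage = max(stage_hours, key=stage_hours.get)
    return stage, stage_hours[stage] / total


stage, share = find_bottleneck(stage_hours)
print(f"{stage} accounts for {share:.0%} of cycle time")
```

A stage that dominates the total like this is where removing the dependency on human processing speeds up everything downstream.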

Condition 5: The Use Case Lives Inside Systems the Agent Can Actually Access

For an agent to complete a task, it needs to:

- Get the data (read access)

- Understand it (structured or processable)

- Take action (write/update/send)

If any of these are blocked → the use case breaks.

AI agents require API access, clean data pipelines, and defined permission frameworks.

If the use case spans multiple systems without clear integration points, or requires navigating tools with irregular interfaces, the technical complexity often outweighs the operational benefit.

The diagnostic question: can you list every system the agent would need to touch, and does each one have a usable API? If that answer takes a long time to produce, the integration groundwork is not yet in place.
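
That system list can be kept as a simple checklist. The following sketch records, for each system, the three needs described above (read, process, act) plus API availability, and reports what is missing. The class, field names, and example systems are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SystemAccess:
    name: str
    can_read: bool      # agent can get the data
    processable: bool   # data is structured or otherwise processable
    can_write: bool     # agent can write, update, or send
    has_api: bool       # a usable API exists


def access_gaps(systems: list) -> dict:
    """Return, per system, the requirements that are not yet met."""
    gaps = {}
    for s in systems:
        checks = [
            ("read access", s.can_read),
            ("processable data", s.processable),
            ("write access", s.can_write),
            ("usable API", s.has_api),
        ]
        missing = [label for label, ok in checks if not ok]
        if missing:
            gaps[s.name] = missing
    return gaps


systems = [
    SystemAccess("CRM", True, True, True, True),
    SystemAccess("Legacy claims portal", True, False, False, False),
]
print(access_gaps(systems))
```

An empty result means every system on the list clears the bar; anything else is the integration groundwork still to be done.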

What the five conditions have in common

None of them are about the technology. They are about the use case — how well-defined it is, how structured its inputs are, how visible its errors are, how much friction it creates, and how accessible its systems are.

An agent deployed into a use case that clears all five conditions has a credible path from pilot to production.

The use cases that reach production and stay there share a common profile. They are narrow in scope, high in input structure, and low in error consequence. The success condition is describable. The inputs are accessible. The error is detectable.

How Gyde fulfills these conditions

Gyde is an AI transformation partner that builds Specific Intelligence Systems (SIS). These AI systems are narrowly scoped to one defined use case, deeply integrated with your data and policy constraints, and designed with validation layers. Every deployment starts with a use case qualification step, led by AI specialists (POD).

Learn more about SIS →

Top AI Agent Use Cases Examples in 2026

Here is what that looks like across the industries where enterprise AI agent deployment is most active.

AI Agent Use Cases in BFSI

1. KYC and AML document verification

According to Fenergo's 2025 global survey of 800 financial institutions, the average firm spends $72.9 million annually on KYC and AML operations, and 70% lost clients in the past year due to slow or inefficient onboarding.

That is the reason KYC and AML document verification in financial services is one of the strongest AI agent use cases available today.

The document types are defined, the verification criteria are codified in regulation, and the output (verified or flagged for review) is binary enough for an agent to handle reliably. A wrong output is detectable within the same processing cycle, and human review of flagged cases is already built into the compliance workflow.

That scale of manual overhead is precisely where structured agent deployment creates measurable relief.

2. Loan underwriting support

AI-assisted loan underwriting support qualifies well as an augmentation use case. The agent surfaces relevant applicant data, flags risk signals, and prepares a structured summary for the underwriter to review.

The judgment stays with the specialist. The preparation work (which currently consumes a disproportionate share of underwriter time) does not.

3. Regulatory tracking

Automated regulatory change tracking works here because the input structure is high and the error consequence is visible. Regulatory changes are published in structured formats.

An agent that monitors sources, classifies updates by relevance, and routes them to the correct function removes a significant manual overhead from compliance teams without touching the interpretation or response decisions that require human judgment.

4. Marketing compliance checking

Outbound marketing compliance checking involves verifying that financial communications meet regulatory language requirements before they are sent.

The rules are documented, the inputs are text-based and consistent, and a flagged output goes to a human reviewer before anything reaches a customer.

AI Agent Use Cases in Healthcare

1. Medical coding

CMS's 2025 Medicare Fee-for-Service data found an overall improper payment rate of 6.55%, representing more than $28 billion in incorrectly processed claims.

That's why AI-assisted medical coding is one of the most clearly agent-ready use cases in healthcare operations.

ICD-10 codes are a structured classification system. Clinical notes, when they follow documentation standards, are consistent enough in format for an agent to extract the relevant procedure and diagnosis information and suggest the correct codes for coder review.

Error detectability is high — coders review every output before submission.

The volume and consistency of clinical documentation make this a strong candidate for agent-assisted review.

2. Claims processing automation

Healthcare claims processing automation qualifies when claims follow a standard format and validation rules are well-defined.

An agent that checks claims against payer requirements, flags missing fields, and routes clean claims for submission removes a high-volume manual step without touching the adjudication decisions that require clinical judgment.

3. Appointment scheduling and pre-visit communication

Automated appointment scheduling and pre-visit communication works because the trigger is a confirmed booking in the scheduling system and the output is a standardized patient communication.

The inputs are structured, the success criteria are clear, and the error scenario (a wrong appointment time or instruction set) is visible and correctable before it causes downstream harm.

4. Pharmacy stock prediction

Pharmacy stock prediction is a use case where the cost of getting it wrong is immediate and visible. A stockout on a critical medication is a patient safety event.

Consumption data, order history, and formulary requirements are all system-resident and structured. The agent doesn't need to interpret anything ambiguous. Instead, it compares what's being used against what's available, models near-term demand, and flags replenishment needs before the gap becomes a problem.

The decision to reorder stays with the pharmacy team. The monitoring burden does not.

AI Agent Use Cases in Retail

1. Inventory forecasting and out-of-stock alerting

Retail inventory forecasting and out-of-stock alerting is a use case where the logic is rule-based, the inputs are machine-readable, and the output is a flag for human action rather than an autonomous decision.

An agent comparing stock-on-hand data against sales velocity and flagging variances above a defined threshold operates entirely within structured system data and produces outputs that are immediately verifiable.
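
As a rough sketch of that comparison, the example below uses days of cover (stock on hand divided by daily sales velocity) and flags SKUs that fall under a threshold. Days of cover is one common way to frame the check and is an assumption here, as are the function name and the 7-day default.

```python
def low_cover_skus(stock_on_hand: dict,
                   daily_sales: dict,
                   min_days_of_cover: float = 7.0) -> list:
    """Flag SKUs projected to run out in fewer than `min_days_of_cover` days."""
    flagged = []
    for sku, units in stock_on_hand.items():
        velocity = daily_sales.get(sku, 0.0)
        if velocity <= 0:
            continue  # no recent sales, so no projected stockout
        if units / velocity < min_days_of_cover:
            flagged.append(sku)
    return flagged
```

The output is only a list of flags. What to reorder, and when, stays with the person reviewing it.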

2. SKU categorization

Automated SKU categorization works because product attributes are structured, the categorization taxonomy is defined, and a miscategorized SKU is detectable during the next catalog review cycle.

The volume is high enough that agent deployment creates meaningful capacity relief for merchandising teams.

3. Customer complaint analysis

AI-powered customer complaint analysis and routing involves classifying incoming complaints by type, sentiment, and urgency and directing them to the correct resolution team.

The output is a routing decision. Human judgment stays at the point where it matters.

4. Return risk prediction

E-commerce return risk prediction is a use case where historical transaction data, product category, and customer behavior patterns are all system-resident and structured.

An agent that scores return probability at the point of purchase gives operations teams an early signal without requiring them to act on every output — the threshold for human review can be calibrated to the team's capacity.
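
One simple way to calibrate that threshold, sketched below, is to rank the day's scores and set the cutoff at the lowest score the team still has capacity to review. The function name and the idea of a fixed daily capacity are assumptions for illustration; the scoring model itself is out of scope.

```python
def review_threshold(scores: list, daily_capacity: int) -> float:
    """Pick the lowest score that still gets reviewed, given review capacity.

    Orders scored at or above the returned value go to human review.
    Ties at the threshold can push the count slightly above capacity.
    """
    if daily_capacity <= 0 or not scores:
        return float("inf")  # nothing can be reviewed
    ranked = sorted(scores, reverse=True)
    return ranked[min(daily_capacity, len(ranked)) - 1]
```

With capacity for two reviews and scores of 0.9, 0.2, 0.75, 0.4, and 0.6, the threshold lands at 0.75, so only the two riskiest orders reach a human.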

What these use cases have in common

None of them hand the final decision to the agent. In every case, the agent operates on structured inputs, produces a defined output (a classification, a flag, a summary, a draft) and passes that output to a human or a downstream system where the consequence of error is manageable.

That is the design principle. Agent deployment works best when the agent handles the preparation and the human handles the determination. The use cases are well-defined, and that division of labor is what makes them deployable.

Get Your AI Use Case Qualification Scorecard

If you have a use case in mind, run it through this scorecard as you go.

One thing worth saying before you do: it's the organizational knowledge that sits with you that actually decides whether a use case is deployable.

You know which workflows are actually broken, which errors your team quietly absorbs every quarter, and where expert capacity is being spent on things it shouldn't be.

The scorecard below just gives that knowledge structure.

How to Prioritize When Multiple Use Cases Qualify

It’s normal to have multiple “qualified” AI agent use cases. The real decision is which one to start with.

Your first use case is not just about value. It builds:

- Integration groundwork → makes future deployments faster

- Audit trail → gives compliance/legal confidence

- Stakeholder trust → unlocks internal buy-in

Don’t pick the most impressive. Pick the most defensible.

Prioritize a use case that:

- Passes all 5 conditions (not just most)

- Has low integration complexity

- Can reach production cleanly

- Performs reliably from day one

- Produces clear, shareable outcomes
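
The first two criteria in that list lend themselves to a simple filter-then-rank step. The sketch below keeps only candidates that pass all five conditions, then picks the one with the lowest integration complexity. The class, the 1-to-5 complexity rating, and the example names are hypothetical, and the remaining criteria would still need human judgment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    name: str
    conditions_passed: int       # out of the 5 conditions
    integration_complexity: int  # hypothetical rating: 1 (low) to 5 (high)


def pick_first_use_case(candidates: list) -> Optional[Candidate]:
    """Return the fully qualified candidate with the lowest integration complexity."""
    qualified = [c for c in candidates if c.conditions_passed == 5]
    if not qualified:
        return None
    return min(qualified, key=lambda c: c.integration_complexity)


candidates = [
    Candidate("KYC document verification", 5, 3),
    Candidate("Underwriting summaries", 4, 1),
    Candidate("Appointment reminders", 5, 2),
]
first = pick_first_use_case(candidates)
print(first.name if first else "No candidate passes all five conditions")
```

Note that the candidate with the lowest complexity overall is excluded because it passes only four conditions, which mirrors the “all 5, not just most” rule.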

In short:

- Start small, but complete: A narrower use case that works end-to-end beats a bigger one with gaps.

- Build proof: Use the first deployment to create evidence: performance, reliability, adoption.

- Then scale up: Move to use cases with more complexity, higher stakes, or broader organizational impact.

Building the Foundation for Production-Grade AI

You came into this blog with a use case in mind, or a list of them.

By now you have a clearer sense of which ones are ready, which ones need more internal alignment before they need an agent, and which ones are genuinely not agent territory regardless of how often they come up in planning conversations.

That clarity is the most valuable output of the qualification process.

That's the clarity Gyde brings to the table before anything gets built. Gyde's Specific Intelligence Systems are designed for AI agent use cases that pass that test: narrow in scope, deeply integrated with your data and policy constraints, and built with the validation layers that production use actually requires.

Not broad automation. One defined problem, solved completely, with the governance and reliability that enterprise operations depend on.

The first deployment that works end-to-end (reliably, auditably, without constant intervention) is what makes the second one easier to justify. That's how AI stops being a pilot and starts becoming infrastructure.

FAQs

1. What are examples of AI agent use cases that fail in enterprises?

AI agent projects often fail when applied to low-volume, high-risk decisions (like legal approvals or financial sign-offs) or when inputs are scattered across emails, documents, and conversations instead of structured systems. These use cases create ambiguity that agents cannot reliably handle.

2. How do you measure ROI from AI agent implementation?

ROI from AI agents is typically measured through cycle time reduction, decrease in manual effort, error rate improvement, and increased throughput. In enterprise settings, impact is often seen in faster decision-making and improved operational efficiency rather than direct cost savings alone.

3. Do AI agents replace employees or augment their work?

In most enterprise deployments, AI agents augment human work rather than replace it. They handle preparation tasks like data extraction, classification, or summarization, while humans retain control over final decisions—especially in high-stakes scenarios.

4. What technical infrastructure is required to deploy AI agents in enterprises?

Successful AI agent deployment requires API-accessible systems, clean and structured data pipelines, permission frameworks, and monitoring mechanisms. Without this foundation, even well-defined use cases struggle to move from pilot to production.

5. How long does it take to implement an AI agent use case?

Implementation timelines vary based on complexity, but well-scoped enterprise AI agent use cases can typically move from qualification to production in a few weeks to a few months. Faster deployments usually involve narrowly defined problems with existing data and integrations in place.

![How to Identify Enterprise AI Agent Use Cases [2026 Guide]](/content/images/size/w2000/2026/05/How-to-Spot-Enterprise-Use-Cases_That-Need-an-AI-Agent.jpg)