Forward Deployed Engineer roles have exploded in demand. According to the Financial Times, hiring interest has grown 800% since January 2025. Yet for a role gaining so much attention, it remains widely misunderstood.

"What does an FDE actually do?"

"Is it consulting in disguise?"

"How is it different from solutions engineering or technical delivery?"

This confusion runs across the market: engineers considering the role, companies hiring for it, and current FDEs defining what excellence looks like.

This blog explains what Forward Deployed Engineers really do, where they create value today, and how organizations can deploy them effectively.

TABLE OF CONTENTS

- Why Enterprises Created the Forward Deployed Engineer

- What a Forward Deployed Engineer Actually Is

- How Enterprises Deploy FDEs Today

- Where FDEs Create Measurable Value

- The FDE Performance Gap

- How Gyde Addresses This Gap

- FDE vs. AI Delivery POD: A Side-by-Side Comparison

- End Note

- Frequently Asked Questions

Why Enterprises Created the Forward Deployed Engineer

The role traces back to Palantir. Its enterprise customers weren't failing to adopt the software because the product was inadequate. The issue was that their internal systems, data environments, and organisational workflows were far more complex than any external team could anticipate from the outside.

Palantir's FDEs didn’t ship from headquarters. They were embedded inside a customer’s environment and built against that complexity from the inside. They were not consultants who advised. They operated end-to-end.

With the rise of AI transformation, the same problem has resurfaced: getting a capable AI system or agent to actually function inside a specific organization's data environment, compliance constraints, and operational workflows is harder than building it.

That is precisely the gap Forward Deployed Engineering (FDE) is designed to close: the gap between AI pilots and AI in production.

The evidence supports this. According to MIT research, 95% of enterprise AI pilots produce zero measurable return.

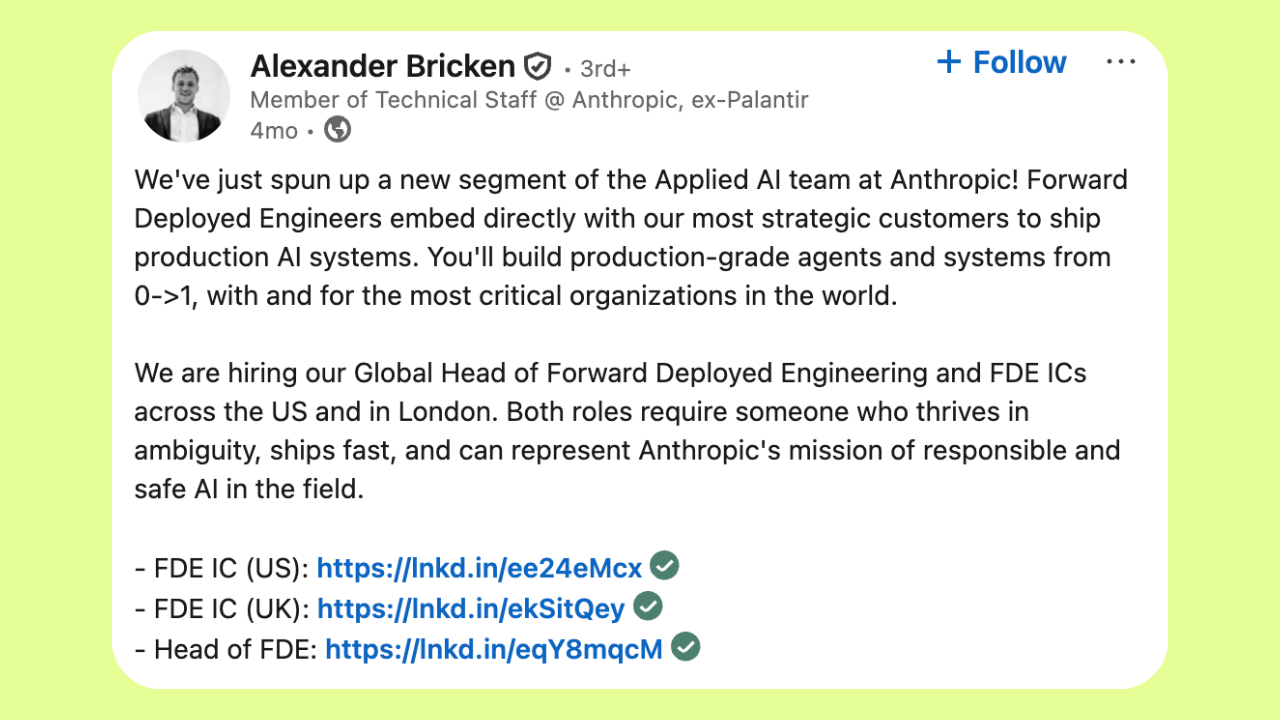

As a result, OpenAI formalised its FDE practice in 2024.

Anthropic, Scale AI, Databricks, and Salesforce followed.

In March 2026, Accenture launched a dedicated forward deployed engineering practice in partnership with Microsoft to help organisations scale AI across enterprise environments.

The spread of the role shows that the last-mile AI implementation problem has not been solved by simpler approaches such as self-serve software, standard onboarding, or remote support.

What Is a Forward Deployed Engineer?

A forward deployed engineer is a technical specialist who embeds within a customer's operating environment to implement, integrate, and operationalise AI systems and agents in production.

FDEs cover a range of work that typical engineering positions do not: pre-sales technical scoping, post-sales implementation, system integration, workflow configuration, evaluation and monitoring, and ongoing iteration.

FDE Engagement Lifecycle

- Discovery

- Problem Definition

- Integration Design

- Build

- Evaluation Framework

- Deploy

- Monitoring

- Knowledge Transfer

In practice, these stages often run simultaneously, and frequently in environments with legacy infrastructure and regulatory constraints. Palantir described the responsibility set plainly: "FDEs work in small teams and own end-to-end execution of high-stakes projects."

The role has also acquired adjacent names across companies. Some call them Forward Deployed AI Engineers. Some companies use Solutions Architects or Technical Delivery Engineers for overlapping responsibilities.

What differentiates the FDE is that they work directly in the customer environment and contribute learnings back to the product their company sells — closing the loop between field reality and product development.

How Enterprises Deploy FDEs Today

There is no single FDE deployment model. In practice, enterprises and AI vendors use several configurations, each with different trade-offs.

1. Vendor-Embedded FDE (Palantir, OpenAI, Scale AI model)

The AI vendor sends one or more FDEs directly into the customer environment for the duration of the implementation. With deep product knowledge and direct access to the vendor’s engineering teams, the FDE can quickly escalate issues—especially when customer needs expose product limitations.

OpenAI's FDE practice grew from two engineers at the start of 2024 to 39 by the end of the year. Across deployments in financial services, manufacturing, and telecommunications, the team documented 20–50% efficiency improvements. At Morgan Stanley, a deployed AI assistant reached a 98% adoption rate.

The vendor-embedded model works best when the use case has not been implemented before in the customer's organization, the implementation complexity is high, and speed-to-production matters more than internal capability building.

2. Rotational FDE (Consulting firm model)

Large consulting firms (such as Accenture, Deloitte, and IBM) deploy FDE-equivalent roles that rotate across multiple client engagements. The engineer gains breadth across industries and use cases but typically less depth in any single customer's operational context.

This model suits enterprises that need implementation support across multiple concurrent AI programmes rather than deep embedding in one.

3. Internal FDE (Enterprise-built capability)

Some enterprises hire FDE-equivalent talent internally — engineers whose primary role is to take AI models and vendor platforms and make them work inside the organisation's specific environment. This is common in large financial institutions and technology companies with significant AI investment.

The internal model builds proprietary delivery capability but requires finding and retaining a profile that is rare: engineers who combine technical depth with business acumen and comfort operating in organisational complexity.

4. POD-based delivery (Structured team model)

To break the cycle of person-dependency, leading organizations are moving away from the "lone wolf" FDE. Instead, they embed cross-functional AI Delivery PODs that integrate engineering, domain expertise, and governance.

(This is the model Gyde operates on, covered in detail below.)

This team-based approach changes what gets built. While a solo engineer might spend their time on fragmented data fixes, a POD is designed to architect a cohesive, production-grade environment.

Where FDEs Create Measurable Value

The FDE model produces clear results under identifiable conditions. It fits best when the following four aspects match your enterprise reality.

When Enterprises Need Forward Deployed Engineers

- Complex Technical Environment: Legacy infrastructure, fragmented data sources, and workflows that cannot be paused during implementation.

- Highly Specific Use Case: The AI deployment is domain-specific enough that generic rollout playbooks do not work.

- Large Product-to-Reality Gap: There is a wide gap between the vendor’s product capabilities and the customer’s operational context.

- Need for Fast Results: The customer needs a working system quickly, but internal capacity to build it does not exist.

Let's take a real-world example from Salesforce's FDE practice.

When a reservation platform's AI agent began failing because of data sync issues between the Agentforce knowledge library and Data 360, a Salesforce FDE team resolved every pending issue within a week.

Without embedded access and vendor-side product knowledge, the same issues would have sat across standard escalation queues for significantly longer.

The pattern across well-documented FDE engagements is consistent: embedded engineers who understand both the product and the customer's operating reality close the last-mile gap faster than any other delivery model currently available.

The FDE Performance Gap: Why Individual Placement Isn’t Building Capability

The case for embedded delivery is well-established, yet many enterprise leaders find that simply "placing" a Forward Deployed Engineer (FDE) doesn't yield the expected ROI.

This gap exists because most enterprises are operating with incomplete representations of how work actually happens.

Most organizations define work through documented workflows (SOPs, process maps, and system-defined steps) that describe how work should happen, not how it actually happens.

The friction occurs because the challenges are both operational (how they work today) and structural (how the organization scales tomorrow).

1. FDEs Are Often Away From Decisions

FDEs are frequently sidelined from the very environments where they could be most effective.

- Operational Friction: FDEs are often not embedded where decisions actually happen (e.g., remote vs. on-ground with business teams). By missing critical customer workshops and decision flows, they lack the real-time execution context needed to build relevant solutions.

- Knowledge Silos: Consequently, the understanding of a customer's workflows, edge cases, and business logic remains undocumented and tied to the individual engineer (FDE). When that engineer rotates off, that institutional knowledge goes with them, shifting the dependency from the system to the person.

2. Important Knowledge Stays With One Person

Instead of engineering high-level solutions, FDEs often become the "glue" holding fragile processes together.

- Operational Friction: They frequently get pulled into low-level data integration and debugging rather than driving business outcomes.

- Structural Risk: When a role fills a reliability gap, the system never becomes self-sustaining; the gap stays person-dependent and fails to shrink over time.

3. FDEs Spend Time Fixing Small Problems

The current model relies on "heroics" rather than a repeatable process.

- Efficiency Drain: Because FDEs are bogged down in troubleshooting and disconnected from the core business flow, one engineer can typically only manage one or two deployments at meaningful depth.

- Widening Gap: As AI use cases multiply across compliance, marketing, and customer service, the only response is to add more FDEs. However, with 50% of organizations still lacking adequate internal AI/ML expertise (World Quality Report 2025), demand is growing much faster than the supply of qualified engineers.
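The scaling constraint described above comes down to simple arithmetic. The sketch below is a rough illustration, assuming each FDE can carry at most two deployments at meaningful depth (the upper end of the range mentioned above). The function name `fdes_required` and the use-case counts are hypothetical, not measured data.

```python
import math


def fdes_required(use_cases: int, deployments_per_fde: int = 2) -> int:
    """Headcount needed when each FDE can carry a fixed number of deployments."""
    if use_cases < 0 or deployments_per_fde < 1:
        raise ValueError("use_cases must be >= 0 and deployments_per_fde >= 1")
    # Round up: a partially loaded engineer is still a full hire.
    return math.ceil(use_cases / deployments_per_fde)


# Illustrative use-case counts only.
for n in (2, 10, 40):
    print(f"{n} use cases -> {fdes_required(n)} FDEs")
```

Under this assumption headcount grows in direct proportion to the number of use cases, which is the linear scaling the section describes.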

How Gyde Addresses This Gap

The AI POD is Gyde's structured alternative to individual embedded delivery. It is designed to solve the same pilot-to-production gap, but in a scalable and repeatable way.

Each POD is a five-person team that operates as an extension of the client organisation for the duration of an engagement.

- Product Manager: Requirements & stakeholder alignment

- 2 AI Engineers: Model development & integration

- AI Governance Engineer: Compliance & security oversight

- Deployment Specialist: Production deployment & monitoring

- DevOps + Data Engineer: Optional support as needed

The critical design decision is where intelligence lands. In an individually-focused FDE model, intelligence accumulates in the engineer's experience. In the POD model, intelligence is built into the system (the workflow logic, the guardrails, the retrieval architecture, the monitoring and audit layer) from the start.

How the four-week cycle works:

Week 1: Discovery and Alignment. The Product Manager works with the client's stakeholders to define the use case, success metrics, and technical requirements. AI Engineers assess data availability and integration points. The use case is scoped tightly to one business bottleneck, with measurable outcomes defined before build begins.

Week 2: Build and Iterate. AI Engineers develop the solution using pre-built workflows or custom development. Daily feedback loops keep the build aligned with operational reality rather than a requirements document that may not reflect how work actually happens.

Week 3: Governance and Testing. The AI Governance Engineer validates compliance, security, and guardrails before any production deployment. End-to-end testing is conducted in the client's actual environment.

Week 4: Deploy and Monitor. The Deployment Specialist takes the solution to production, configures monitoring and alerting, and transfers operational knowledge to the client team. The organisation's dependency is on the system's capability, not on a named engineer staying engaged.

Gyde's structured team model (the POD delivery model) builds a complete production system (also called an SIS) against the enterprise's data, context, and constraints.

Because the architecture is reusable (connectors, guardrails, governance framework), each subsequent use case deploys faster than the last. Enterprises that start with a single sprint have an architecture they can extend, not a one-off delivery they need to rebuild from scratch.

Difference Between Forward Deployed Engineering Model and Gyde AI POD Delivery Model

| Dimension | Forward Deployed Engineer | Gyde AI Delivery POD |
|---|---|---|
| Team structure | Individual engineer (sometimes a small team) | 5-person cross-functional unit: PM, 2 AI Engineers, AI Governance Engineer, Deployment Specialist |
| Delivery scope | Use-case-driven scope that varies based on enterprise systems, data, and constraints | Standardised system-level scope covering build, governance, deployment, and lifecycle for scalable reuse |
| Governance | Varies by engagement; often handled separately | AI Governance Engineer embedded in every POD as standard |
| Timeline | Variable; often months to production | One production-ready AI system per 4-week sprint |
| Knowledge transfer | Accumulated in the engineer; transferred at end of engagement | Built into the system continuously; structured handover to client team |
| Scalability | Scales linearly with headcount (one FDE per use case) | Add PODs in parallel; architecture is reusable across use cases |
| Domain depth | Depends on individual's prior experience | Domain knowledge scoped and validated in Week 1 discovery |
| Post-deployment | Monitoring and iteration varies by contract | Deployment Specialist handles monitoring, alerting, and ongoing optimisation |
| Enterprise tooling | Integrates with existing systems where possible | Embedded directly in existing workflows |
| Cost model | Individual placement or consulting day rates | Structured sprints: Single Sprint, Quarterly Retainer, or Multi-POD Scale |

AI PODs include forward deployed engineering capability, but extend it into a structured, cross-functional delivery model.

End Note

Forward deployed engineers exist because the enterprise AI implementation gap is significant. They help move AI from pilots to production.

But three limitations persist:

- Structure: Without clear processes, roles, and repeatable playbooks, embedded engineering becomes expensive improvisation—effective once, but hard to repeat.

- Scalability: One FDE per deployment means headcount grows linearly with use cases.

- Knowledge retention: When process knowledge and decisions remain tied to individuals rather than systems, organizations cannot sustain or extend what was built.

This is the question enterprises must answer: Can your delivery model turn into repeatable capability?

Gyde’s AI POD model is built for that outcome. It is cross-functional by design, system-first by default, and structured to make each subsequent deployment faster than the last.

FAQs

What skill matters most for a forward deployed engineer?

The ability to diagnose the real problem before building anything. The most effective FDEs are best at pattern recognition. Identifying what is actually blocking a workflow, rather than what the client says is blocking it, determines whether the final system works in production.

What is process debt in enterprise AI?

Process debt is the gap between how an organisation documents its workflows and how work actually happens. Formal processes cover the expected path. Exceptions, workarounds, and edge cases live in employees' heads and are never written down.

AI systems configured against documented processes fail on the undocumented ones, which in most enterprises represent the majority of real operational complexity.
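One way to make process debt visible is to compare the step sequences that actually occur against the documented ones. The sketch below is illustrative only: the step names, the `undocumented_share` function, and the sample cases are hypothetical, and real traces would have to come from the organisation's own system logs.

```python
def undocumented_share(observed_cases, documented_paths):
    """Return the fraction of observed cases that follow no documented path."""
    documented = {tuple(path) for path in documented_paths}
    if not observed_cases:
        return 0.0
    misses = sum(1 for case in observed_cases if tuple(case) not in documented)
    return misses / len(observed_cases)


# Hypothetical data: one documented "happy path" and four observed cases.
documented_paths = [["receive", "validate", "approve"]]
observed_cases = [
    ["receive", "validate", "approve"],                # matches the SOP
    ["receive", "validate", "escalate", "approve"],    # undocumented exception
    ["receive", "manual_fix", "validate", "approve"],  # undocumented workaround
    ["receive", "validate", "reject"],                 # undocumented edge case
]

share = undocumented_share(observed_cases, documented_paths)
print(f"{share:.0%} of observed cases follow no documented path")
```

In this made-up sample, three of the four cases fall outside the documented path, which is the portion a system configured only against the SOP would be unprepared for.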

Why do enterprise AI pilots fail to reach production?

Because the architecture built to prove feasibility rarely holds at scale. A proof of concept is optimised to demonstrate capability under controlled conditions. Production introduces edge cases, inconsistent inputs, and volume that expose every assumption the prototype made. Most enterprise AI timelines underestimate this gap and stall there.

Should FDEs feed insights back to the product team?

Yes. Most organisations treat this as optional when it is structural. When an FDE encounters the same gap across multiple clients, it is a product gap, not a one-off integration issue. Organisations that capture this signal compound their delivery capability over time.

Those that treat FDEs as pure delivery functions spend on implementation without building any institutional intelligence.

What makes an FDE engagement economically sustainable?

Delivery speed compounding over time. An FDE practice where the 50th deployment takes as long as the 10th is a headcount-scaling problem, not a delivery model.

Sustainable FDE economics require reusable architecture, documented patterns, and tooling that reduces time-to-value on each subsequent use case, so the cost of delivery falls as the number of deployments grows.
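The compounding effect can be sketched with a toy model. The numbers below are assumptions chosen for illustration, not benchmarks: a hypothetical first deployment of 12 weeks, a `reuse_gain` parameter for the share of effort saved on each subsequent deployment, and a floor below which delivery time cannot fall.

```python
def deployment_weeks(n: int, first_weeks: float = 12.0,
                     reuse_gain: float = 0.1, floor_weeks: float = 1.0) -> float:
    """Weeks needed for the n-th deployment (1-indexed) under a simple reuse model."""
    if n < 1:
        raise ValueError("n must be >= 1")
    # Each earlier deployment trims the next one by `reuse_gain`, down to a floor.
    return max(floor_weeks, first_weeks * (1 - reuse_gain) ** (n - 1))


def total_weeks(count: int, **kwargs) -> float:
    """Cumulative delivery time across the first `count` deployments."""
    return sum(deployment_weeks(i, **kwargs) for i in range(1, count + 1))


# With no reuse, the 50th deployment takes as long as the 10th.
print(deployment_weeks(10, reuse_gain=0.0), deployment_weeks(50, reuse_gain=0.0))

# With reuse, later deployments get cheaper and the cumulative total grows more slowly.
print(round(total_weeks(10, reuse_gain=0.0), 1), round(total_weeks(10, reuse_gain=0.1), 1))
```

Setting `reuse_gain` to zero reproduces the headcount-scaling case described above, while any positive value makes the cost per deployment fall as the number of deployments grows.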